Published on September 13, 2024 7:00 PM GMT

With Lakera's strides in securing LLM APIs, Goodfire AI's path to scaling interpretability, and 20+ model evaluation startups, among much else, a rising number of technical startups are attempting to secure the model ecosystem.

Of course, these companies have varying levels of impact on superintelligence containment and security, and even with them in the field, there is still a lot of room for aligned, ambitious, and high-impact startups within the ecosystem. This point isn't new: it has been made in our previous posts and by Eric Ho (Goodfire AI CEO).

To set the stage, our belief is that these are the types of companies that will have a positive impact:

- Startups with a profit incentive completely aligned with improving AI safety;
- that have a deep technical background to shape AGI deployment; and
- that do not try to compete with AGI labs.

Piloting AI safety startups

To understand impactful technical AI safety startups better, Apart Research joined forces with collaborators from Juniper Ventures, vectorview (alumni from the latest YC cohort), Rudolf (from the upcoming def/acc cohort), Tangentic AI, and others. We then invited researchers, engineers, and students to resolve a key question: “Can we come up with ideas that scale AI safety into impactful for-profits?”

The hackathon took place over a weekend two weeks ago, with a keynote by Esben Kran (co-director of Apart) along with ‘HackTalks’ by Rudolf Laine (def/acc) and Lukas Petersson (YC / vectorview). Each submission was a 4-page report covering the problem statement, why the solution will work, the key risks of the solution, and any experiments or demonstrations the team made.

This post details the top 6 projects and excludes 2 projects that were made private by request (hopefully turning into impactful startups now!). In total, we had 101 signups and 11 final entries. Winners were decided by an LME model conditioned on reviewer bias. Watch the authors' lightning talks here.

Dark Forest: Making the web more trustworthy with third-party content verification

By Mustafa Yasir (AI for Cyber Defense Research Centre, Alan Turing Institute)

Abstract: ‘DarkForest is a pioneering Human Content Verification System (HCVS) designed to safeguard the authenticity of online spaces in the face of increasing AI-generated content. By leveraging graph-based reinforcement learning and blockchain technology, DarkForest proposes a novel approach to safeguarding the authentic and humane web. We aim to become the vanguard in the arms race between AI-generated content and human-centric online spaces.’

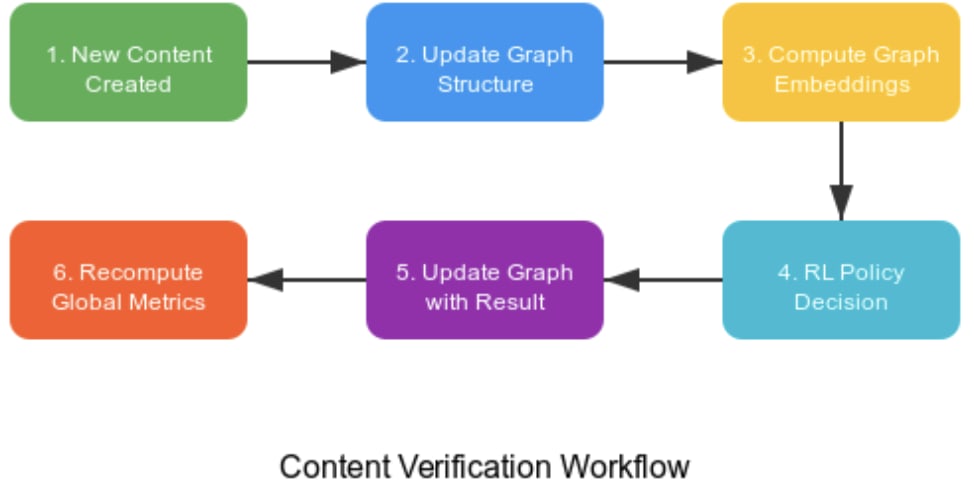

Content verification workflow supported by graph-based RL agents deciding verifications

Reviewer comments:

Natalia: Well explained problem with clear need addressed. I love that you included the content creation process - although you don't explicitly address how you would attract content creators to use your platform over others in their process. Perhaps exploring what features of platforms drive creators to each might help you make a compelling case for using yours beyond the verification capabilities. I would have also liked to see more details on how the verification decision is made and how accurate this is on existing datasets.

Nick: There's a lot of valuable stuff in here regarding content moderation and identity verification. I'd narrow it to one problem-solution pair (e.g., "jobs to be done") and focus more on risks around early product validation (deep interviews with a range of potential users and buyers regarding value) and go-to-market. It might also be worth checking out Musubi.

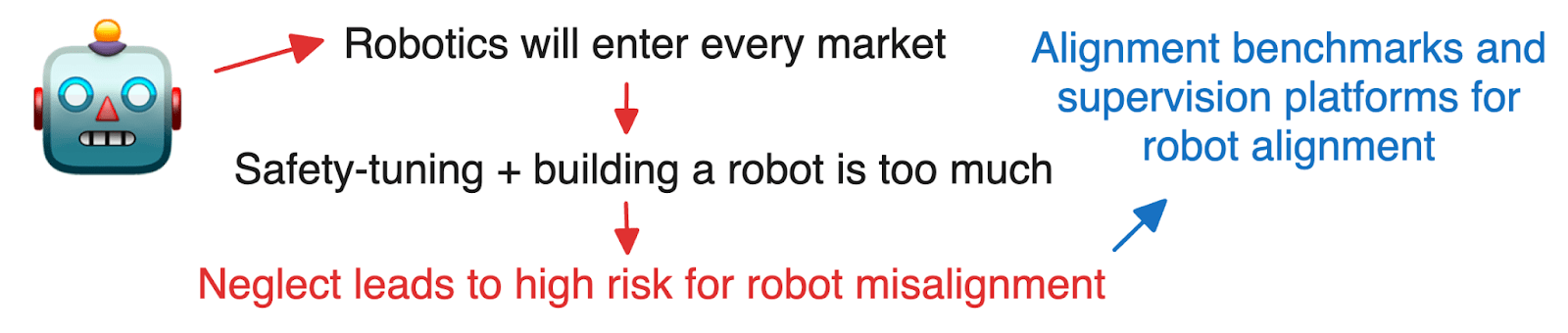

Simulation Operators: An annotation operation for alignment of robot

By Ardy Haroen (USC)

Abstract: ‘We bet on agentic AI being integrated into other domains within the next few years: healthcare, manufacturing, automotive, etc., and the way it would be integrated is into cyber-physical systems, which are systems that integrate the computer brain into a physical receptor/actuator (e.g. robots). As the demand for cyber-physical agents increases, so would be the need to train and align them. We also bet on the scenario where frontier AI and robotics labs would not be able to handle all of the demands for training and aligning those agents, especially in specific domains, therefore leaving opportunities for other players to fulfill the requirements for training those agents: providing a dataset of scenarios to fine-tune the agents and providing the people to give feedback to the model for alignment. Furthermore, we also bet that human intervention would still be required to supervise deployed agents, as demanded by various regulations. Therefore leaving opportunities to develop supervision platforms which might highly differ between different industries.’

Esben: Interesting proposal! I imagine the median fine-tuning capability for robotics at labs is significantly worse than LLMs. At the same time, it's also that much more important. I know OpenAI uses external contractors for much of their red-teaming work and it seems plausible that companies would bring in an organization like you describe to reduce the workload on internal staff. If I ran a robotics company however, I would count it as one of my key assets to be good at training that robot as well and you will probably have to show a significant performance increase to warrant your involvement in their work. I'm a fan of alignment companies spawning and robotics seems to become a pivotal field in the future. I also love the demand section outlining the various jobs it would support / displace.

Natalia: Really interesting business model! Good job incorporating new regulation into your thinking and thinking through the problem/proposed solution and its implications. However, I am curious about what would differentiate your proposed solution relative to existing RLHF service providers (even if currently mostly not applied to robotics).

AI Safety Collective: Crowdsourcing solutions to critical corporate AI safety challenges

By Lye Jia Jun (Singapore Management University), Dhruba Patra (Indian Association for the Cultivation of Science), Philipp Blandfort

Abstract: 'The AI Safety Collective is a global platform designed to enhance AI safety by crowdsourcing solutions to critical AI Safety challenges. As AI systems like large language models and multimodal systems become more prevalent, ensuring their safety is increasingly difficult. This platform will allow AI companies to post safety challenges, offering bounties for solutions. AI Safety experts and enthusiasts worldwide can contribute, earning rewards for their efforts. The project focuses initially on non-catastrophic risks to attract a wide range of participants, with plans to expand into more complex areas. Key risks, such as quality control and safety, will be managed through peer review and risk assessment. Overall, The AI Safety Collective aims to drive innovation, accountability, and collaboration in the field of AI safety.'

Minh (shortened): This is a great idea, similar to what I launched 2 years ago: super-linear.org. Your assessments are mostly accurate. Initially, getting postings isn't too difficult - people often post informal prizes/bounties across EA channels. However, you'll quickly reach a cap due to the small size of the EA/alignment community and the even smaller subset of bounties. Small ad-hoc prizes don't scale well. Manifund's approach of crowdfunding larger AI Safety projects is more effective, as these attract more effort and activity. Feel free to contact me or Manifund for more information. While their focus differs slightly, many lessons would apply. Meanwhile, here's a list of thousands of AI Safety/alignment bounty ideas I've compiled. Also check out Neel Nanda's open mech interp problems doc.

Esben: Pretty great idea, echoing a lot of our thoughts from the Apart Sprints as well! There are still a few issues with a bounty platform like this that would need to be resolved, e.g. 1) which problems that require little work from me and can be given to others without revealing trade information would actually be high enough value to get solved while not causing security risks to my company and 2) how will this platform compete with places like AICrowd and Kaggle (I'd assume this would be mostly based on the AI safety focus). At the same time, there's some nice side benefits, such as the platform basically exposing predicted incidents for vulnerabilities at AI companies (though again, dependent on companies actually sharing this). Cool project and great to see many of your ideas in the live demo from your presentation!

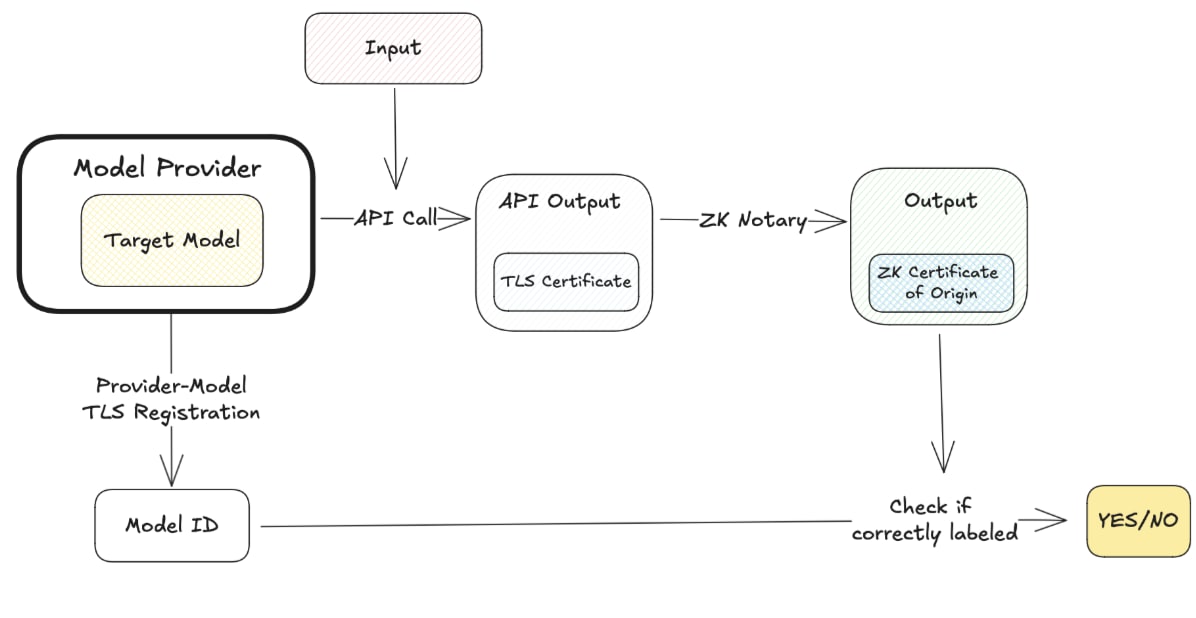

Identity System for AIs: Re-tracing AI agent actions

By Artem Grigor (UCL)

Abstract: 'This project proposes a cryptographic system for assigning unique identities to AI models and verifying their outputs to ensure accountability and traceability. By leveraging these techniques, we address the risks of AI misuse and untraceable actions. Our solution aims to enhance AI safety and establish a foundation for transparent and responsible AI deployment.'

Esben: What a great project! This is a clear solution to a very big problem. It seems like the product would need both providers and output-dependent platforms to be in on the work here, creating an incentive to mark your models in the first place. I would also be curious to understand if a malicious actor wouldn't be able to simply forge the IDs and proofs for a specific output? In general, it would be nice to hear about more limitations of this method, simply due to my somewhat limited cryptography background. It would also be interesting to see exactly where it would be useful, e.g. how exactly can something like Facebook use it to combat fake news? Otherwise, super nice project and clearly showcases a technical solution to an obvious and big problem while remaining commercially viable as well.
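To make the idea of identity-tagged model outputs concrete, here is a minimal sketch. It is not the project's actual design, which this post does not reproduce. As a simplifying assumption it uses a symmetric HMAC from Python's standard library, where a real system would more likely use public-key signatures so that third parties can verify outputs without holding any secret. The `ModelRegistry` class and its method names are hypothetical.

```python
import hashlib
import hmac
import json
import secrets


class ModelRegistry:
    """Hypothetical registry that issues model IDs and checks output tags."""

    def __init__(self):
        # Maps model_id -> secret key. Assumption: only the registry holds keys.
        self._keys = {}

    def register(self, model_name: str) -> str:
        """Create a unique ID and a fresh secret key for a model."""
        model_id = f"{model_name}-{secrets.token_hex(6)}"
        self._keys[model_id] = secrets.token_bytes(32)
        return model_id

    def _tag(self, model_id: str, output: str) -> str:
        # Bind the tag to both the model ID and the output text.
        message = json.dumps([model_id, output]).encode("utf-8")
        return hmac.new(self._keys[model_id], message, hashlib.sha256).hexdigest()

    def sign(self, model_id: str, output: str) -> dict:
        """Return a record that attributes `output` to `model_id`."""
        return {
            "model_id": model_id,
            "output": output,
            "tag": self._tag(model_id, output),
        }

    def verify(self, record: dict) -> bool:
        """Check that the record's tag matches its model ID and output."""
        model_id = record.get("model_id")
        if model_id not in self._keys:
            return False  # unknown identity
        expected = self._tag(model_id, record.get("output", ""))
        # Constant-time comparison avoids leaking tag prefixes via timing.
        return hmac.compare_digest(expected, record.get("tag", ""))
```

In this sketch, `registry.sign(model_id, text)` produces a record and `registry.verify(record)` returns `False` if the output text or the model ID has been changed after signing. On the forgery question raised above: under this scheme, producing a valid tag for a new output requires the model's secret key, so the guarantee is only as strong as the key management around it.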

Honorable mentions

Besides the two projects that were kept private, several other projects deserve honorable mentions. We also suggest you check out the rest of the projects.

ÆLIGN: Aligned agent-based Workflows via collaboration & safety protocols

ÆLIGN is a multi-agent orchestration tool for reliable / safe agent interaction. It specifies communication protocol designs and monitoring systems to standardize and control agent behavior. As Minh mentions, the report undersells the value of the idea, and the demo represents it well. Read the full project here.

WELMA: Open-world environments for Language Model agents

WELMA details a sandbox tool for building environments to test language models. It takes much of the challenge paradigm of METR's and AISI's evals and considers how that paradigm might look at the next stage. Read the full project here.

What evidence is this for impactful AI safety startups?

So, was our pilot for AI safety startups successful? We knew beforehand that no startup idea would be a be-all and end-all solution to a specific problem. Safety and security are complex, and a single problem (e.g. the chance of escape from rogue superintelligences) can give a single company work for years.

With that said, we're cautiously optimistic about the impact companies might have on AI safety over the next few years. The update we see among both participants and our networks is well summarized by our participant Mustafa Yasir (AI for Cyber Defense Research Centre):

“[The AI safety startups hackathon] completely changed my idea of what working on 'AI Safety' means, especially from a for-profit entrepreneurial perspective. I went in with very little idea of how a startup can be a means to tackle AI Safety and left with incredibly exciting ideas to work on. This is the first hackathon in which I've kept thinking about my idea, even after the hackathon ended.”

And we can only agree.

If you are interested in joining future hackathons, find the schedule here.

Thank you to Nick Fitz, Rudolf Laine, Lukas Petersson, Fazl Barez, Jonas Vollmer, Minh Nguyen, Archana Vaidheesvaran, Natalia Pérez-Campanero Antolin, Finn Metz, and Jason Hoelscher-Obermaier for making this event a success.
