Computer vision competitions are currently an important event for teams working in this area. They provide an opportunity to try new solutions, practice, and simply enjoy solving interesting problems.

We have previously described on the BLOG@CACM our participation in the VOTS Challenge in 2023, and the appearance of our solution on the leaderboard in 2024.

That same year, we developed a rather interesting solution that showed decent results; but at the last moment, due to technical problems, we lost the trained model and did not have time to fix everything before the deadline. Therefore, we will probably talk about this solution in a future post.

That same year, since the basic solution that we had been making for half a year was not ready, we had to come up with something quickly, and at the same time, overcome the quality threshold. We want to tell readers about such an experience in this post.

Multimodal Models Qwen2.5-VL

To solve the problem, it was decided to use an unusual approach based on multimodal LLMs. They can potentially solve the problem without any additions at all. Among the multimodal LLMs, some of the most famous are the Qwen series models. In particular, we took Qwen2.5-VL.

It can work with texts, images, and videos. This model exists in four variants: 3B; 7B; 32B; and 72B. The authors do not recommend using more than 24,000 tokens per input. In our work, we used the 7B variant because even without quantization it fits on one A6000 48GB video card. The 32B version requires two such GPUs, and the 72B version requires four. There are also quantized versions, but there was no need to use them, since they are the same in speed, and there was enough memory.

Experiment 1: Working with Videos

What if, given an object (or its name) and the entire video, we ask the LLM to build a track of it? After all, the LLM must understand the context well when the entire video is available at once.

On the first frame of the video, we know the object to search for; so, first we simply ask Qwen what the object in the picture is and, knowing its name, we can make a request to the entire video. We experimented with the first validation video (Fig. 1). This video contains the “black and white cat.”

The number of tokens needed for a video is calculated using the formula as follows:

tokens = (frames / 2) * (height / 28) * (width / 28)

The original video is available in 1280×720 resolution and 1542 frames. If you use the video as a whole without changes, to analyze it, you will need:

tokens = 46 * 26 * 771 = 922116 tokens

This is significantly higher than the 24,000 limit. Therefore, it is necessary to either reduce the resolution several times or use fewer frames. If you do not change the resolution, it will be safe to use 30-40 frames. We tried using 30 frames.

We asked Qwen to describe each version of the video:

Query: ‘Describe this video’

Reply by Qwen-7B: The video features a black and white cat standing near the rear tire of a vehicle. The cat appears to be looking up, possibly at the camera or something above it. The setting seems to be an outdoor area with some debris on the ground, suggesting it might be a less maintained or industrial location. The tire is large and appears to belong to a truck or a similar heavy vehicle. The background includes parts of the vehicle’s body and some scattered objects on the ground, indicating that the area might be used for storage or maintenance purposes. The overall atmosphere of the video is casual and candid, capturing a moment in the life of the cat in its environment.

We gave the answer for version 7B. All four versions did well, although the answer from 3B contained hallucinations. That is, we learned that the neural network understands well what exactly is happening in the video. And it may be able to build a track for the object we need. Next, we asked to build a track for each frame.

Query: ‘Find black and white cat in every frame of the video and print it in the form ‘: [x1, y1, x2, y2], : [x1, y1, x2, y2], … for all 30 frames in json format.’

But such a request, unfortunately, does not work.

Answer Qwen-32B: json [{“bbox_2d”: [94, 0, 925, 672], “label”: “black and white cat”}]

Answer Qwen-72B: [

{“0”: [94, 287, 465, 727], “1”: [94, 287, 465, 727], “2”: [94, 287, 465, 727], “3”: [94, 287, 465, 727], “4”: [94, 287, 465, 727], “5”: [94, 287, 465, 727], “6”: [94, 287, 465, 727], “7”: [94, 287, 465, 727], “8”: [94, 287, 465, 727], “9”: [94, 287, 465, 727], “10”: [94, 287, 465, 727], “11”: [94, 287, 465, 727], “12”: [94, 287, 465, 727], “13”: [94, 287, 465, 727], “14”: [94, 287, 465, 727], “15”: [94, 287, 465, 727], “16”: [94, 287, 465, 727], “17”: [94, 287, 465, 727], “18”: [94, 287, 465, 727], “19”: [94, 287, 465, 727], “20”: [94, 287, 465, 727], “21”: [94, 287, 465, 727], “22”: [94, 287, 465, 727], “23”: [94, 287, 465, 727], “24”: [94, 287, 465, 727], “25”: [94, 287, 465, 727], “26”: [94, 287, 465, 727], “27”: [94, 287, 465, 727], “28”: [94, 287, 465, 727], “29”: [94, 287, 465, 727]

]

That is, the answer is only for one frame. We tried several different similar queries, but there were no correct answers for the track.

For the large version, the answer turned out to be more similar to the truth, but all the numbers are the same.

It is quite possible that there were simply no similar tasks in the training dataset.

We found out that the neural network can solve problems of this type:

Query: ‘Localize the location of the cat in the video, output the start and end timestamp for each event, and describe each cat location with bbox_2d. Provide the result in json format with ‘mm:ss.ff’ format for the time depiction.’

Answer Qwen-7B: json

[ { "start_time": "00:00.00", "end_time": "00:17.40", "description": "A black and white cat is walking in front of a truck tire." }, { "start_time": "00:17.40", "end_time": "00:35.80", "description": "The cat stops and looks at the camera." }]Thus, it is probably possible to train Qwen 2.5 to solve the video tracking problem, but this requires either the initial Qwen model to be trained on similar problems, or one can try to train it by oneself, for example, on the TAO dataset [see Dave, A., Khurana, T., Tokmakov, P., Schmid, C., & Ramanan, D. (2020). Tao: A large-scale benchmark for tracking any object. In Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part V 16 (pp. 436–454). Springer International Publishing.], etc.

Experiment 2: Working with Individual Images

Since it was impossible to work with the video directly, the idea of searching for a given object in separate images was tested.

First, an attempt was made to use two images and a question.

Variant 1: Two full-size images are fed to the input. In the first one, the sought object was marked with a red rectangle.

"content": [ { "type": "image", "image": image_reference_path, }, { "type": "image", "image": image_search_path, }, { "type": "text", "text": "Target object is located inside the red rectangle on [Image 1]. Can you find this target object on [Image 2]? Report the bbox coordinates of the target object on [Image 2] in JSON format." },],Variant 2: Only the cut image of the desired object and the current frame from the video are fed to the input.

"content": [ { "type": "image", "image": image_reference_path, }, { "type": "image", "image": image_search_path, }, {"type": "text", "text": "Find object from [Image 1] on [Image 2] near bbox with coordinates {}. Report coordinates of object in JSON format.".format(previous_cooridnates[0])},],Both variants quite often fail, and Qwen (for some reason) returns nothing.

Variant 3: Therefore, it was decided to split the query into two. Only for the very first frame, it was decided to find out the short name of the object and then search for this object on each frame of the video. We used the following queries for the tests:

LLM_TASK_V01 = "Describe an object located in a red rectangle in 2-3 words."LLM_TASK_V02 = "Describe an object located in a red rectangle in 2-3 words. Make attention to color, shape and other characteristics which make this object unique for this picture. Don't use verbs in description. Don't mention the red rectangle."Then, on all the subsequent frames, we searched for the objects according to the text description:

search_text = f"Find object '{object_name}' near bbox with coordinates {previous_cooridnates[0]}. Report coordinates of object in JSON format."For cases where there are many objects, we immediately asked Qwen to return the one that is closest to the object in the last processed frame.

The last 3rd variant works stably.

Since the conditions of the task require that the object mask be returned, traditionally the Segment Anything Model (SAM) of the first or second version is used for this purpose. But it would be good to get rid of launching the second model and ask Qwen to return the polygon right away. Unfortunately, this is not possible either.

It seems that the training set did not contain requests for searching polygons. In this case, theoretically, it is possible to further train Qwen to search for polygons by selecting the appropriate image datasets for the instance segmentation task.

Example query to find a polygon: “text”: “I gave you 2 images. In the first image, the object is marked in red rectangle. Can you find this object in the second image? Please print the contour of the object in the form of a polygon [x1, y1, x2, y2, …, xN, yN] as a python list. If there is no such object on the 2nd image, then the output is [-1, -1].”

In the end, it was impossible not to use SAM 2.

Experimental Results

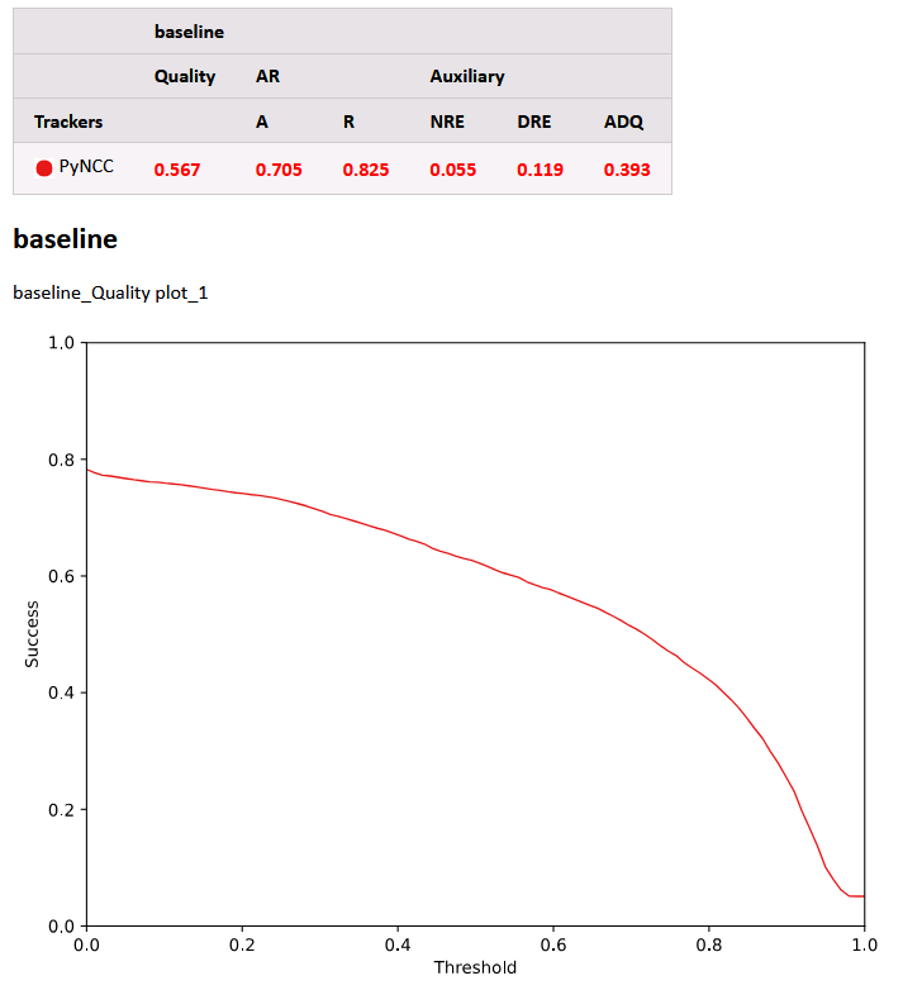

The experiments were mainly carried out on the Qwen version 7B model with a good balance of quality/speed of work. The results on the validation videos are given below.

For test videos on LB, we got the metric Quality = 0.37. Quite a large gap is explained by the nature of validation videos. They rarely contain identical objects. This method copes well with the videos where the desired object is one and different from the others. For videos with a large number of identical objects, the method does not work the best and, apparently, requires additional improvements.

Conclusion

In general, the proposed method proved itself successful; especially considering that the solution was obtained in a very limited time. Multimodal LLMs proved their effectiveness and versatility, so they can be used to quickly create various solutions with good quality.

For practical use of our tracker, the original model can be modified by the additional training as follows:

1) to immediately predict a track for each frame for a given input video;

2) to predict not a box, but a polygon for the desired object to get rid of the SAM model;

3) to search for an object by the reference specified in the second image (not by the text description);

4) to search more accurately for the object closest to the specified one.

Perhaps, it makes sense to add tracking methods that use predictions for all the frames processed (not just for the last frame before the specified one as is done in our current version).

The main conclusion from our experience is that participating in Computer Vision competitions is fun (we knew that in advance), and even if everything doesn’t go as expected, some multimodal models will come to the rescue, thanks to which one can get a good solution (a new result) in a short period of time.

Aleksandr Romanov is the professor and head of CAD Laboratory of HSE University, Moscow, Russia.

Roman Solovyev is a Chief Researcher in IPPM RAS, Moscow, Russia.