Disclaimer: The details in this post have been derived from the LinkedIn Engineering Blog. All credit for the technical details goes to the LinkedIn engineering team. The links to the original articles are present in the references section at the end of the post. We’ve attempted to analyze the details and provide our input about them. If you find any inaccuracies or omissions, please leave a comment, and we will do our best to fix them.

LinkedIn uses Apache Kafka, an open-source stream processing platform, as a key part of its infrastructure.

Kafka was first developed at LinkedIn and later open-sourced. Many companies now use Kafka, but LinkedIn uses it on an exceptionally large scale.

LinkedIn uses Kafka for multiple tasks like:

Tracking user activity

Exchanging messages

Collecting metrics

They have over 100 Kafka clusters with more than 4,000 servers (called brokers), handling over 100,000 topics and 7 million partitions. In total, LinkedIn's Kafka system processes more than 7 trillion messages every day.
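To put these figures in perspective, a quick back-of-the-envelope calculation helps. The sketch below uses only the numbers quoted above. Since they are stated as "more than" values, the results are rough floor averages, not measured rates, and the per-broker figures assume load is spread evenly, which is an assumption of this sketch.

```python
# Back-of-the-envelope averages from the figures quoted in the article.
# All inputs are lower bounds ("more than ..."), so the outputs are too.
MESSAGES_PER_DAY = 7_000_000_000_000  # 7 trillion
BROKERS = 4_000
TOPICS = 100_000
PARTITIONS = 7_000_000

SECONDS_PER_DAY = 24 * 60 * 60

avg_messages_per_second = MESSAGES_PER_DAY / SECONDS_PER_DAY
# Assumption: traffic is spread evenly over all brokers.
avg_messages_per_broker_per_second = avg_messages_per_second / BROKERS
avg_partitions_per_topic = PARTITIONS / TOPICS
# Assumption: the article does not say whether replicas are counted,
# so this ignores replication entirely.
avg_partitions_per_broker = PARTITIONS / BROKERS

print(f"{avg_messages_per_second:,.0f} messages/s overall")
print(f"{avg_messages_per_broker_per_second:,.0f} messages/s per broker")
print(f"{avg_partitions_per_topic:,.0f} partitions per topic")
print(f"{avg_partitions_per_broker:,.0f} partitions per broker")
```

On average that works out to roughly 81 million messages per second overall, about 20,000 per broker per second, 70 partitions per topic, and 1,750 partitions per broker. Real traffic is bursty and unevenly distributed, so peaks are likely to sit well above these averages.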

Operating Kafka at this scale creates challenges in terms of scalability and operability. To tackle these issues, LinkedIn maintains its own version of Kafka, tailored to its production needs and scale. This includes LinkedIn-specific release branches that contain patches for its production requirements and feature needs.

In this post, we’ll look at how LinkedIn manages its Kafka releases running in production and how it develops new patches to improve Kafka for the community and internal usage.

LinkedIn’s Kafka Ecosystem

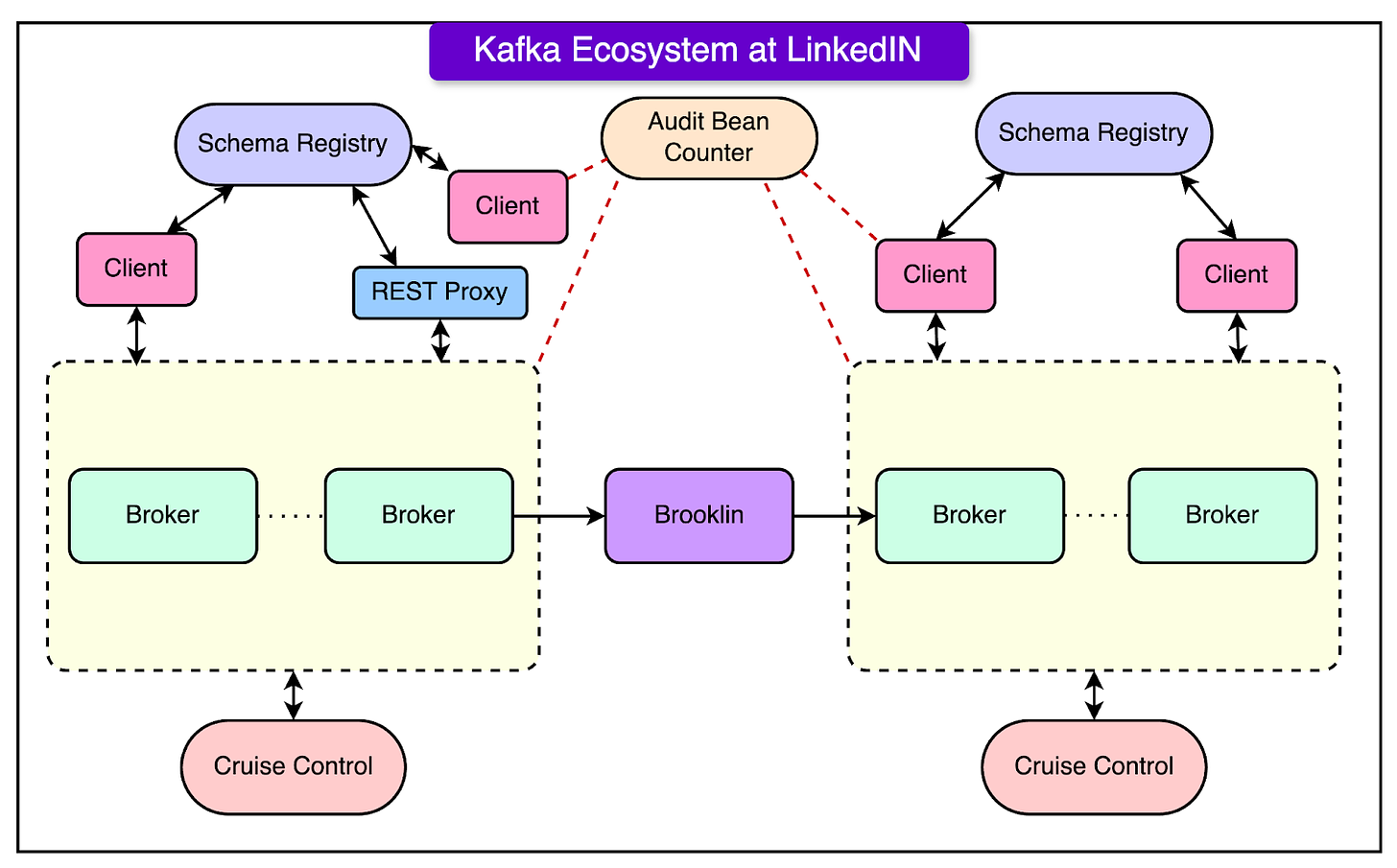

Let us first take a high-level look at LinkedIn’s Kafka ecosystem.

LinkedIn's Kafka ecosystem is a crucial part of its technology stack, enabling it to handle an immense volume of messages: around 7 trillion per day.

The ecosystem consists of several key components that work together to ensure smooth operation and scalability. The diagram above shows how they fit together.

Here are the details about the various components:

Kafka Clusters and Brokers

LinkedIn maintains over 100 Kafka clusters

These clusters consist of more than 4,000 brokers

The clusters handle over 100,000 topics and 7 million partitions

Applications with Kafka Clients

Various applications within LinkedIn’s software stack use Kafka clients to interact with the Kafka clusters.

These applications use Kafka for tasks like activity tracking, message exchanges, and metrics gathering

REST Proxy

The REST proxy enables non-Java clients to interact with Kafka

It provides a RESTful interface for producing and consuming messages

Schema Registry

The schema registry is used for maintaining Avro schemas

Avro is a data serialization format used by LinkedIn for its messages

The schema registry ensures data consistency and compatibility between producers and consumers
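To make the compatibility idea concrete, here is a minimal sketch of one rule a registry might enforce: a newer schema can still read older messages only if every field it adds has a default value. This is a simplified illustration. Real Avro schema resolution covers more cases (type promotion, aliases, unions), and the `PageView` schemas and function names below are hypothetical, not taken from LinkedIn's registry.

```python
# Toy backward-compatibility check in the spirit of Avro schema resolution.
# Assumption: only "new field needs a default" is checked here.

def added_fields_without_default(old_schema: dict, new_schema: dict) -> list:
    """Names of fields that the new schema adds without providing a default."""
    old_names = {field["name"] for field in old_schema["fields"]}
    return [
        field["name"]
        for field in new_schema["fields"]
        if field["name"] not in old_names and "default" not in field
    ]


def is_backward_compatible(old_schema: dict, new_schema: dict) -> bool:
    """True if a reader using new_schema can decode data written with old_schema."""
    return not added_fields_without_default(old_schema, new_schema)


# Hypothetical schemas for illustration only.
page_view_v1 = {
    "type": "record",
    "name": "PageView",
    "fields": [
        {"name": "memberId", "type": "long"},
        {"name": "url", "type": "string"},
    ],
}
page_view_v2 = {
    "type": "record",
    "name": "PageView",
    "fields": page_view_v1["fields"]
    + [{"name": "referrer", "type": "string", "default": ""}],
}
page_view_v3 = {
    "type": "record",
    "name": "PageView",
    "fields": page_view_v1["fields"] + [{"name": "sessionId", "type": "string"}],
}

print(is_backward_compatible(page_view_v1, page_view_v2))
print(added_fields_without_default(page_view_v1, page_view_v3))
```

Here `page_view_v2` passes because `referrer` has a default, while `page_view_v3` is rejected because old messages carry no `sessionId` and the reader has nothing to fill in.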

Brooklin

Brooklin is a tool used for mirroring data between Kafka clusters

It enables the replication of data across different data centers or environments

Cruise Control

Cruise Control is a tool used for cluster maintenance and self-healing

It automatically balances partitions across brokers and handles broker failures

Cruise Control helps ensure optimal performance and resource utilization
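The balancing idea can be illustrated with a deliberately simple greedy loop that moves one partition at a time from the fullest broker to the emptiest one. This is a toy sketch, not Cruise Control's algorithm: it balances on partition count alone, whereas a real balancer would also weigh resource usage, and all broker and partition names are made up.

```python
def rebalance(assignment: dict, max_moves: int = 100) -> list:
    """Greedily even out partition counts across brokers.

    `assignment` maps broker name -> list of partitions and is modified in
    place. Returns the list of (partition, from_broker, to_broker) moves.
    """
    moves = []
    for _ in range(max_moves):
        fullest = max(assignment, key=lambda b: len(assignment[b]))
        emptiest = min(assignment, key=lambda b: len(assignment[b]))
        # Stop once no broker holds more than one partition above another.
        if len(assignment[fullest]) - len(assignment[emptiest]) <= 1:
            break
        partition = assignment[fullest].pop()
        assignment[emptiest].append(partition)
        moves.append((partition, fullest, emptiest))
    return moves


# Hypothetical cluster: one overloaded broker and one empty broker.
brokers = {
    "broker-1": ["t-0", "t-1", "t-2", "t-3", "t-4"],
    "broker-2": ["t-5"],
    "broker-3": [],
}
moves = rebalance(brokers)
for partition, source, target in moves:
    print(f"move {partition}: {source} -> {target}")
```

In this example three moves are enough to leave each broker with two partitions. Capping the number of moves matters in practice because every move means copying data between brokers.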

Pipeline Audit and Usage Monitoring

LinkedIn uses a pipeline completeness audit to ensure data integrity

They also have a usage monitoring tool called “Bean Counter” to track Kafka usage metrics
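A completeness audit boils down to comparing how many messages were produced in a time window with how many arrived at the other end of the pipeline. The sketch below shows that comparison under simple assumptions: counts are already aggregated per window, and the window labels, counts, and tolerance are invented for illustration. It is not LinkedIn's audit implementation.

```python
def find_incomplete_windows(produced: dict, delivered: dict,
                            tolerance: float = 0.0) -> dict:
    """Return {window: missing_count} for windows that lost too many messages.

    A window is flagged when the fraction of missing messages exceeds
    `tolerance` (0.0 means any loss is flagged).
    """
    gaps = {}
    for window, sent in produced.items():
        received = delivered.get(window, 0)
        if sent and (sent - received) / sent > tolerance:
            gaps[window] = sent - received
    return gaps


# Hypothetical per-window message counts.
produced = {"10:00": 1000, "10:10": 1200, "10:20": 900}
delivered = {"10:00": 1000, "10:10": 1188, "10:20": 900}

gaps = find_incomplete_windows(produced, delivered, tolerance=0.005)
print(gaps)
```

Only the 10:10 window is flagged here, because 12 of its 1,200 messages (1%) never arrived, which is above the 0.5% tolerance.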

All these components work together to form LinkedIn’s robust and scalable Kafka ecosystem.

The ecosystem enables LinkedIn to handle the massive volume of real-time data generated by its users and systems while maintaining high performance and reliability.

The LinkedIn engineering team continuously improves and customizes its Kafka deployment to meet specific needs. It also contributes many enhancements back to the open-source Apache Kafka project.

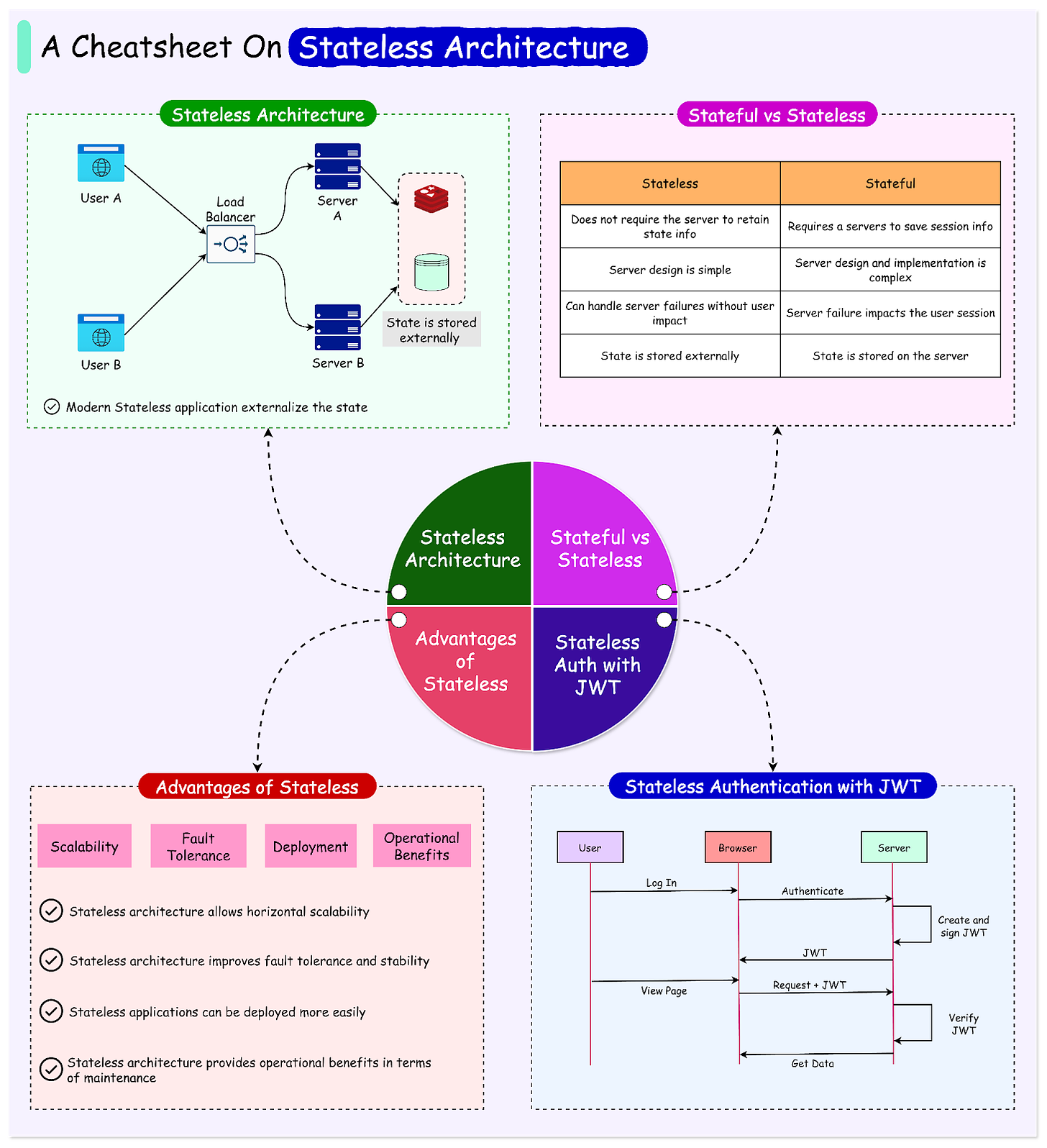

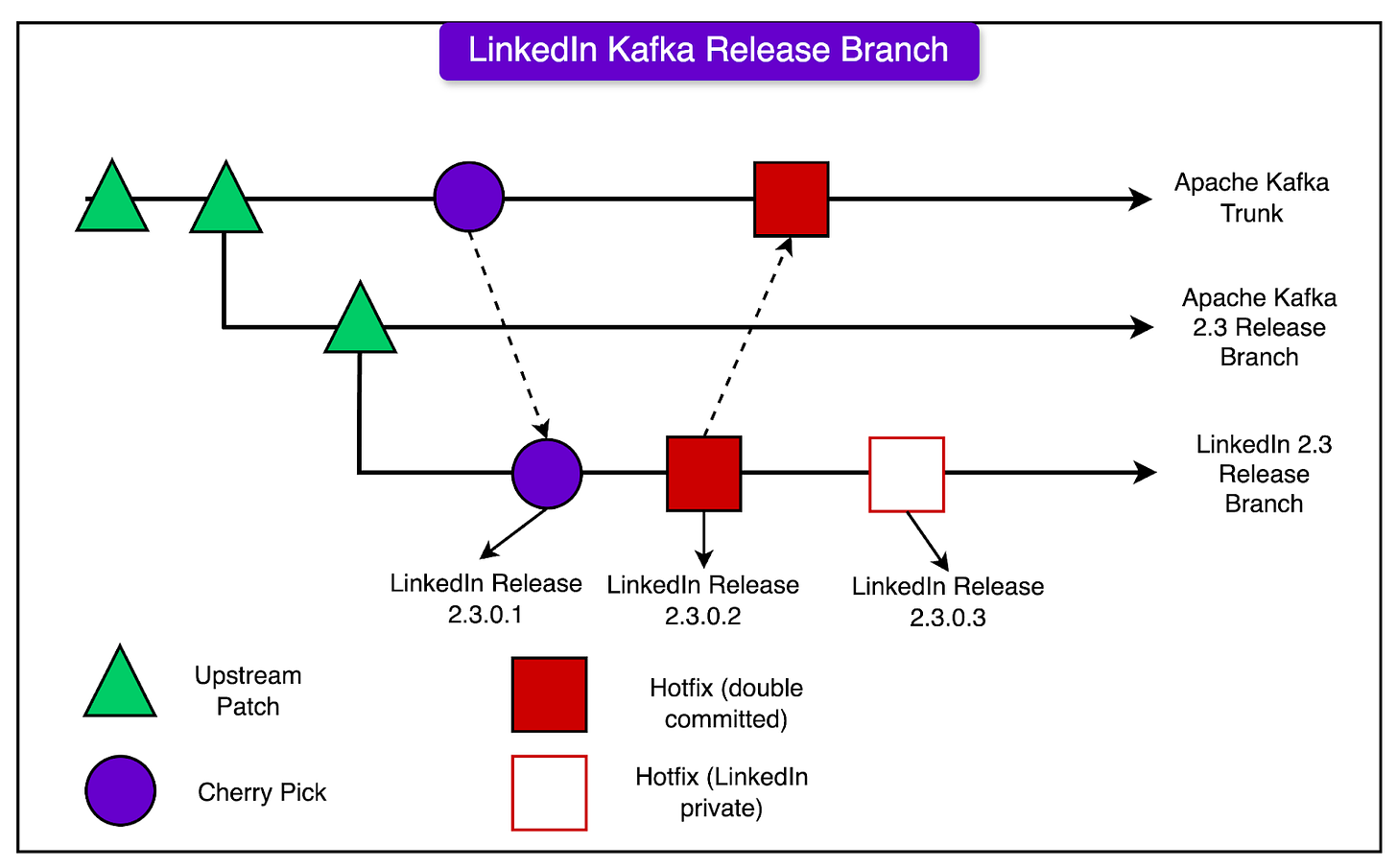

LinkedIn’s Kafka Release Branches

LinkedIn maintains its own versions of Kafka, which are based on the official open-source Apache Kafka releases. These versions are called LinkedIn Kafka Release Branches.

Each LinkedIn Kafka Release Branch starts from a specific version of Apache Kafka. For example, they might create a branch called “LinkedIn Kafka 2.3.0.x”, which is based on the Apache Kafka 2.3.0 release.

LinkedIn makes changes and adds extra code (called “patches”) to these branches to help Kafka work better for their specific needs. They have two main ways of adding these patches:

Upstream First: This is where they make the change in the official Apache Kafka code first. Then, they bring that change into their LinkedIn branch later. This approach is used for changes that are not urgent.

LinkedIn First (Hotfix): This is where they make the change in their LinkedIn branch first, to fix an urgent problem quickly. Then, they try to also add this change to the official Apache Kafka code later.

The diagram below shows the approach to managing the releases:

In the LinkedIn Kafka Release Branches, one can find a mix of different types of patches:

Regular Apache Kafka patches from when they created the branch.

Patches that LinkedIn made and added to Apache Kafka first (Upstream First).

Patches for urgent fixes that LinkedIn made in their branch first (LinkedIn First/Hotfix).

Patches that are specific to LinkedIn and are not in the official Apache Kafka code.

When LinkedIn creates a new Kafka Release Branch, it starts from the latest Apache Kafka release. Then, they look at their previous LinkedIn branch and bring over any of their patches that haven’t been added to the official Apache Kafka code yet.

They use special notes in the code changes to keep track of which patches have been added to the official release and which are still just in the LinkedIn version. Also, they regularly check the Apache Kafka code and bring in new changes to keep their branch up to date.
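The carry-over step can be thought of as a set difference: take every patch on the previous LinkedIn branch and keep the ones the new upstream base does not already contain. The sketch below illustrates that idea only. The patch identifiers, the `marker` values, and which patches count as "already upstream" are all hypothetical and say nothing about LinkedIn's actual commit conventions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Patch:
    patch_id: str
    marker: str  # hypothetical tracking note, e.g. "cherry-pick", "hotfix", "li-only"


def patches_to_carry_over(previous_branch: list, upstream_patch_ids: set) -> list:
    """Patches from the previous LinkedIn branch missing from the new upstream base."""
    return [p for p in previous_branch if p.patch_id not in upstream_patch_ids]


# Hypothetical contents of the previous LinkedIn release branch.
previous_branch = [
    Patch("fix-controller-memory", "cherry-pick"),
    Patch("maintenance-mode", "hotfix"),
    Patch("usage-accounting", "li-only"),
]
# Hypothetical: patches already included in the new Apache Kafka release.
new_upstream_base = {"fix-controller-memory"}

carry = patches_to_carry_over(previous_branch, new_upstream_base)
for patch in carry:
    print(f"re-apply {patch.patch_id} ({patch.marker})")
```

Keeping a marker on each patch is what makes this filter possible: without it, there would be no cheap way to tell which commits are already upstream and which still live only in the LinkedIn branch.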

Finally, they test each new LinkedIn Kafka Release Branch intensively. They run it against real data and usage patterns to confirm that it is stable and performs well before deploying it to production at LinkedIn.

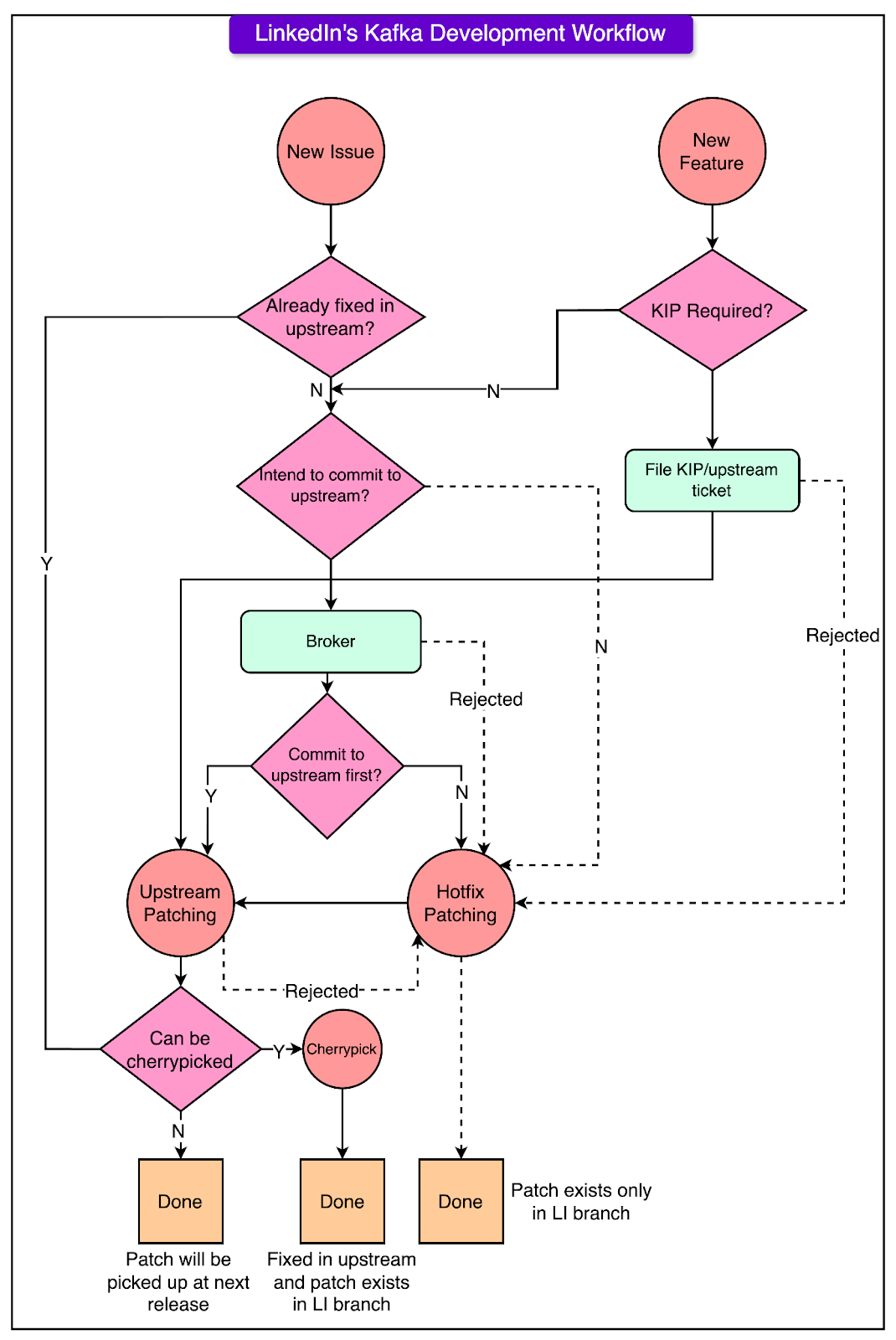

Kafka Development Workflow at LinkedIn

When LinkedIn engineers want to make a change or add a new feature to Kafka, they first have to decide whether to make the change in the official Apache Kafka code (called "upstream-first") or to make the change in LinkedIn's version of Kafka first (called "LinkedIn-first" or "hotfix approach").

Here’s what the decision-making process looks like on a high level:

To make this decision, they think about how urgent the change is.

If it's a change that needs to happen very quickly to fix a problem that's happening in LinkedIn's production systems, they'll usually do it LinkedIn-first. This way, they can get the fix into LinkedIn's version of Kafka and deploy it to their servers as fast as possible.

However, if the change is something that can wait a bit longer, like a week or so, and it's not too big of a change, they'll try to do it upstream first. This means they make the change in the official Apache Kafka code, and then later bring that change into LinkedIn's version.

For new features that have been approved through the Kafka Improvement Proposal (KIP) process, they always try to do these upstream first. This is because new features usually aren't as urgent as fixing production issues, and it's good to contribute new features to the main Apache Kafka project so the wider community can benefit from them.

The diagram below shows the entire flow in more detail.

So in summary, the decision between upstream-first and LinkedIn-first depends on factors like:

The urgency of the change. For example, production fixes are more urgent.

The time it would take to get the change into the official Apache Kafka code. Quicker is better for upstream first.

Whether it's a new feature or a bug fix. New features usually go upstream first.
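The decision factors above can be sketched as a small function. This is a simplified reading of the workflow described in this post, not LinkedIn's actual tooling. The parameter names and the one-week default are assumptions drawn from the "a week or so" remark, and the final fallback case is not covered by the article at all.

```python
def choose_workflow(urgent_production_fix: bool,
                    kip_feature: bool,
                    small_change: bool,
                    days_to_land_upstream: int,
                    max_wait_days: int = 7) -> str:
    """Return 'upstream-first' or 'linkedin-first' for a proposed Kafka change."""
    if kip_feature:
        # New features approved through the KIP process go upstream first.
        return "upstream-first"
    if urgent_production_fix:
        # Urgent fixes land in the LinkedIn branch first, then go upstream.
        return "linkedin-first"
    if small_change and days_to_land_upstream <= max_wait_days:
        # Small changes that can wait about a week go upstream first.
        return "upstream-first"
    # Assumption: the article does not say what happens to large or slow
    # non-urgent changes; this sketch treats them as LinkedIn-first.
    return "linkedin-first"


print(choose_workflow(urgent_production_fix=True, kip_feature=False,
                      small_change=True, days_to_land_upstream=3))
print(choose_workflow(urgent_production_fix=False, kip_feature=False,
                      small_change=True, days_to_land_upstream=5))
print(choose_workflow(urgent_production_fix=False, kip_feature=True,
                      small_change=False, days_to_land_upstream=30))
```

The three calls cover an urgent production fix (LinkedIn-first), a small non-urgent fix (upstream-first), and a KIP feature (upstream-first regardless of size).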

Patch Examples from LinkedIn

LinkedIn has made several changes (called “patches”) to Kafka to help it work better for their specific needs. These patches fall into a few main categories:

Scalability Improvements

LinkedIn has some very large Kafka clusters, with over 140 brokers and millions of data copies (replicas) in a single cluster.

With clusters this big, the controller (the broker that coordinates the rest of the cluster) can become slow or run out of memory. Brokers can also take a long time to start up or shut down.

To fix these problems, LinkedIn made patches to:

Reduce the amount of memory the controller uses, by reusing certain objects and avoiding unnecessary logging.

Speed up broker startup and shutdown, by reducing conflicts when multiple processes try to access the same data. This is also known as lock contention.

Operational Improvements

Sometimes, LinkedIn needs to remove brokers from a cluster and add new brokers. When they remove a broker, they want to make sure all the data on that broker is copied to other brokers first, so no data is lost.

However, this was hard to do, because even while they were trying to move data off a broker, new data was constantly being added to it.

To solve this, they created a new mode called “maintenance mode” for brokers. When a broker is in maintenance mode, no new data is added to it. This makes it much easier to move all the data off the broker before shutting it down.
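One way to picture maintenance mode is as a filter applied when replicas are placed: brokers marked for maintenance are simply skipped. The sketch below shows that interpretation with a round-robin placement. It is an assumption about how such a mode could behave, not LinkedIn's implementation, and the broker IDs and function name are invented.

```python
def assign_replicas(partition_count: int, replication_factor: int,
                    brokers: list, maintenance: set) -> dict:
    """Place replicas round-robin, skipping brokers in maintenance mode.

    Returns {partition_number: [broker_id, ...]}.
    """
    eligible = [b for b in brokers if b not in maintenance]
    if len(eligible) < replication_factor:
        raise ValueError("not enough eligible brokers for the replication factor")
    return {
        partition: [
            eligible[(partition + replica) % len(eligible)]
            for replica in range(replication_factor)
        ]
        for partition in range(partition_count)
    }


# Hypothetical cluster of four brokers; broker 3 is being drained.
assignment = assign_replicas(
    partition_count=3, replication_factor=2,
    brokers=[1, 2, 3, 4], maintenance={3},
)
print(assignment)
```

Because broker 3 never receives new replicas, moving its existing data elsewhere becomes a task with a fixed end point instead of a moving target.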

New Features for Apache Kafka

LinkedIn has added several brand-new features to its version of Kafka, such as:

Keeping track of how much data each user produces and consumes, so that usage can be billed accordingly.

Enforcing a minimum number of data copies when creating new topics, to reduce the risk of data loss if a broker fails.

A new way to reset the position in a topic that a consumer is reading from, to the closest valid position.
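Two of these features are easy to sketch. Resetting to the "closest valid position" can be read as clamping a requested offset into the range the broker still retains, and a minimum replication factor is a validation step at topic creation. Both functions below are illustrative assumptions about the behavior described, with made-up names and default values. For comparison, the standard Apache Kafka consumer setting `auto.offset.reset` offers `earliest`, `latest`, and `none`.

```python
def reset_to_closest(requested_offset: int, log_start_offset: int,
                     log_end_offset: int) -> int:
    """Clamp an out-of-range offset to the nearest end of the retained log."""
    return max(log_start_offset, min(requested_offset, log_end_offset))


def validate_replication_factor(requested: int, minimum: int = 2) -> int:
    """Reject topic creation requests below the minimum replication factor.

    Assumption: the minimum of 2 is illustrative, not LinkedIn's setting.
    """
    if requested < minimum:
        raise ValueError(
            f"replication factor {requested} is below the minimum of {minimum}"
        )
    return requested


# The log currently retains offsets 100 through 500.
print(reset_to_closest(5, 100, 500))    # requested data was already deleted
print(reset_to_closest(900, 100, 500))  # requested offset is past the end
print(reset_to_closest(250, 100, 500))  # in range, left unchanged
```

With clamping, a consumer whose offset has fallen off the start of the log resumes from the oldest retained message, and one that is somehow ahead of the log resumes from the end.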

The LinkedIn engineering team also contributed many improvements directly to the Apache Kafka project, so everyone can benefit from them.

Some major examples include:

Improving how Kafka handles quotas (limits on resource usage).

Adding ways for Kafka to detect and handle outdated control messages and brokers that have been offline.

Separating the communication channels for control messages and data messages.

Adding a way to limit how far behind the compaction process can fall. For reference, compaction is a process that removes old duplicate data.
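For readers unfamiliar with compaction, the sketch below shows the core idea: keep only the latest value for each key. It also includes a simple check for whether a record has waited longer than an allowed lag. This is a toy model over an in-memory list, not Kafka's log cleaner, and the keys, values, and function names are invented. In Apache Kafka, a limit of this kind is exposed as the `max.compaction.lag.ms` topic setting.

```python
def compact(log: list) -> list:
    """Keep only the most recent record per key.

    `log` is a list of (key, value) pairs in offset order. Returns
    (offset, key, value) tuples for the surviving records, in offset order.
    """
    latest = {}
    for offset, (key, value) in enumerate(log):
        latest[key] = (offset, value)  # later records overwrite earlier ones
    return sorted((offset, key, value) for key, (offset, value) in latest.items())


def compaction_overdue(record_age_ms: int, max_lag_ms: int) -> bool:
    """True if a record has stayed uncompacted longer than the allowed lag."""
    return record_age_ms > max_lag_ms


# Hypothetical log with repeated keys.
log = [
    ("user-1", "a"),
    ("user-2", "b"),
    ("user-1", "c"),
    ("user-3", "d"),
    ("user-2", "e"),
]
compacted = compact(log)
print(compacted)
```

After compaction only offsets 2, 3, and 4 remain, one per key. A bound on compaction lag matters when old values must not linger, for example when a newer record is meant to replace sensitive data.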

Conclusion

To summarize, LinkedIn customizes Kafka heavily to handle the immense scale at which it operates.

It also contributes many improvements upstream while maintaining release branches to rapidly address issues. Their development workflow and release branching are designed to balance urgency with contributions going back to the open-source community.

References:
